Scraping large amount of data can lead you to a big mistake. Here is why, and how to avoid it.

In my recent “quiz” about technology, I mentioned that finding usable data buried under mountains of useless one, the activity called “data-mining”, was a perfect application for artificial intelligence and especially neural networks.

But I also mentioned that this activity might also present a huge caveat, a logical bias that we should all be aware of. This bias, very unsurprisingly named “data-mining bias”, is what I want to talk about today.

So let’s talk about this scary “data-mining bias”.

It might sound like something that is very technical and that can only concern geeks. But it is not. It is a very common mistake that everybody is faced with everyday. Me, you, everyone.

The data-mining process is actually a perfectly valid methodology which consists in scraping large amount of data in order to find correlations, time-series, trends, similarities… And *well practiced*, with great caution and self awareness, this can indeed lead to useful discoveries.

But is can also lead to a big mistake. Here is why.

But first let’s illustrate it. Here are some examples

We need to illustrate this in order to have real-world, non nerdy examples to work with, to understand why you should read the end of this post !

First example : journalists sensationalism

Journalists need to scare us, to make us believe everyday that something worth printing about is happening. Sometime there is, but sometime there is really not.

This is especially true when they are reporting, as an example among many others, “extreme” weather conditions.

This is indeed a topic in which they are very often subject to the dreaded data-mining bias. To make up the headlines they go deep into history or geography until they find something to compare the current weather to, making it look “historical” and scare us with something like : “it is so cold out there, proof : is has never been this cold in this very specific county a 17th of November for 23 years, oh my god what is happening WE ARE ALL DOOMED”.

This is “data-mining bias ” 101. I you are a journalist stop doing that. Stop scraping historical data to find anything that might make present spectacular when it is really not. Extreme weather happens, it is normal. It might indeed happen more often than normal recently due to global warming, true, but a single event is not enough to prove anything about it. And this is of course not limited to weather reports: crime rates growing in very limited neighborhood, bloated immigration numbers from specific regions, number of shark attacks on a specific beach… Anything that might scare the public is potentially subject to the bias.

Second example : Weird medical correlations

We can often hear about “medical studies” that “prove” that consuming a certain product give you a high correlation of dying in horrible pain or at the contrary to get younger and even more handsome. They always present very surprising, even weird relations that are found by obscure research teams on very specific subsets of data.

To be perfectly honest, as I am not being naive here, most of those studies are actually lobby financed of course, and while they might actually find data that “prove” what they want to prove, they are dishonest as they know that this does not prove much and hide it.

Here is a textbook example of this : how coffee can make you live longer !

Another one : two days ago my wife tried to convince me that I should stop having my “back home” beer before diner. What she first-link-found on Google did not please her as she stepped into a beer-industry financed campaign based on a study that “proved” that beer was good for our health…

I cowardly tried to use this “research” to validate my vice, but she was not duped. She naturally busted the data-mining bias that was not so subtlety hidden in the study results. There no back-home beer for me anymore…

Look at how those studies limit themselves to very specific samples… this is how you detect the bias, be it voluntary or not. The thing is, by limiting the samples and separating them in limited subsets or “observations” you have a very good probability to find what you want.

Third example : investment “backtesting”

Often, when trying to design an investment strategy, analysts use past market data, be it price or fundamental data, and scrape this data to the bone in order to find the world most famous investment relation :

” If this happens at N, then price is up at N+1, then I get incredibly rich with no pain at N+2″

And they always find such a relation. Always. On paper. They are all looking for the investment martingale ( don’t waste your time and your money this relation does not exists, investment is hard, never gets easier and requires hard work and patience ).

But when they decide to test this in real life ( and I must confess I did ! ) it does not work and they lose money ( and I did, but this is how you learn in the market ).

OK but why are those errors ?

So what is really this damn data-mining bias you are winning about ?

Data-mining is scraping large amount of data in order to find useful information.

Data-mining *bias* is the error to believe that everything you find while data-mining is significant (i.e. non completely pure luck )

The thing is : if you have enough data you will always find “something”. This is statistical truth, not opinion. Maths. There is always someone wining the national lottery. Even if the return distribution is absolutely random, if you try long enough, a very improbable event will happen. There will be a lottery winner, or you will find a correlation that very much looks like not random.

This video shows a very classical “Galton board” which is the best way to image the normal distribution.

As you can see, there are always balls falling to the extremity of the board. It is very improbable but it happens every time because there is so many balls falling. So if you look at those “extreme” balls only, without looking at the rest, you might want to say : “this cannot be random, there is something wrong with the board”.

The main fallacy of the data mining bias is to believe this :

“If it is very improbable, and still happens, then it is not random”

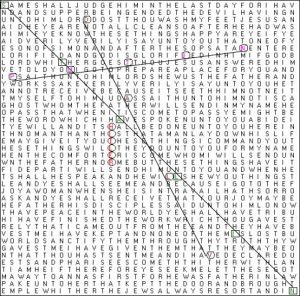

Another good example would be the Nostradamus book’s prophecy trick : if you put all letter of a book in a matrix for each page and search for words in all directions you will end up with a colossal number of words from which you can choose. Here is an example below:

This is “supposedly” a single page from the bible from which a especially creative conspirationist (not me) found “hidden” names. The fact that those very related words are available on the same page is very improbable indeed, however this is pure luck. If you take enough bible pages and analyze them this way you WILL find something that might look like this. There is just so many possible word combinations that it will happen.

If, at the opposite, you try to look for a very specific text on one page you will probably never find it, because it is actually almost pure randomness.

Randomness is often very badly understood. In fact randomness can and must look very very much not random. The best illustration of this is this classic test:

If you were asked to artificially create a “totally random” series of 1.000 head or tails flipping results, you will probably do a very poor job at it (so would I). Noticeably there is a good chance that you will fail to put long enough series of same side flips ( for example 4, 5 or 6 tails in a row), because it will look very NOT random to you.

But it will happen. Statistically if the series is really random it will happen and you should insert long series of same side flips.

Data-mining bias is fueled by 2 errors

The data-mining bias is actually very closely linked to 2 other very important cognitive biases that you should be aware of :

1 – Confusion between correlation and causality. Series of data that are correlated together in a data set can actually have no real world link between them. Correlation that are purely the result of random noise in the data are called “spurious correlations”.

But been correlated does not mean that there is a real-world link, a “causality”, that will hold in other data sets (the future notably). A link that says and verifies : if A goes up then B will go up too in the future”.

If you want good and fun examples of this you can go there. A website gathering spurious correlations that are completely absurd. You will learn for example how US deaths by steam and hot objects used to be strongly correlated with the age of miss America…

2 – Confirmation bias, is even more disturbing : it consists in unconsciously ( if it consciously then it is pure dishonesty and not a bias ) discarding any evidence that is not supporting your preconceived opinion and only keeping evidence that match what you want to find.

Think about it when you search something backing your opinion though a Google search in order to prove your mother-in-law that she is wrong. You will have a natural tendency to overlook the links whose title don’t match your opinion and go directly to links that do. But she IS wrong right ?

So what can I do to prevent this ?

Well first, you have to be aware of it. This should be okay now that you thoroughly read this post (thanks!).

Second, and following this, you should keep extremely prudent when analyzing large data set or listening to studies that analyzed large data set. Keep in mind that you could be fooled by others as well as by yourself.

Last you should always check your finding through what is called “out of sample analysis”. The logic of this is to search for correlations, times-series, well any kind of relation that might have value for you in one limited set of data, but to always verify that it stands in another, totally separate set of data.

For time-series this would mean something like “if I found that the growth of American beaver population has grown alongside the number of car sold in Japan from 1980 to 1990, did that relation hold during the next 10 years ?”.

This will in most case show you that it does not stand the test of time. But sometimes it will and you might then have found something interesting.

One thought on “Beware of the vicious data-mining bias !”